This tutorial is one part of a series of tutorials about generating procedural meshes. See here for an outline.

Vertices

So far I have used simple vertices that carry only a position; the vertex buffer was an array of Vector3 structs. As I mentioned earlier, vertices can be more complex objects that hold further information such as color, normals and texture coordinates. In previous tutorials I hardcoded the color of every pixel of an object in the pixel shader:

float4 PShader(float4 position : SV_POSITION) : SV_Target
{
    return float4(0.0f, 1.0f, 0.0f, 1.0f);
}

This simple pixel shader colors every pixel of an object lime green: the first three values of the float4 struct are the red, green and blue components of the RGB color model, and the fourth is alpha.

To set the color per vertex instead, we first need a vertex structure that can hold additional information about color:

[StructLayout(LayoutKind.Sequential)]
public struct Vertex
{
    public Vector3 Position;
    public int Color;

    public Vertex(Vector3 position, int color)
    {
        this.Position = position;
        this.Color = color;
    }
}

Next, we create a DataStream and write the new vertices to it, as in this statement:

vertices.Write(new Vertex(new Vector3(1.0f, 1.0f, 1.0f), Color.FromArgb(255, 0, 0).ToArgb()));

This creates a new vertex at position x = 1, y = 1, z = 1 and asks the Color struct for the color red, which ToArgb() returns as a single packed 32-bit integer.
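To make the packed color value less abstract, here is a minimal sketch of what that integer looks like. It does not use System.Drawing; the class `ArgbPacking` and its methods `PackArgb` and `LittleEndianBytes` are hypothetical helpers written only for this illustration. `PackArgb` builds the same 0xAARRGGBB layout that Color.ToArgb() returns, and `LittleEndianBytes` lists the bytes in the order a little-endian machine stores them.

```csharp
using System;

public static class ArgbPacking
{
    // Packs four 8-bit channels into one 32-bit value laid out as 0xAARRGGBB,
    // which is the layout Color.ToArgb() returns.
    public static int PackArgb(byte a, byte r, byte g, byte b)
    {
        return unchecked((int)(((uint)a << 24) | ((uint)r << 16) | ((uint)g << 8) | b));
    }

    // Lists the four bytes of the value from lowest to highest,
    // i.e. the order in which a little-endian machine stores them.
    public static byte[] LittleEndianBytes(int argb)
    {
        unchecked
        {
            return new byte[]
            {
                (byte)argb,         // blue
                (byte)(argb >> 8),  // green
                (byte)(argb >> 16), // red
                (byte)(argb >> 24)  // alpha
            };
        }
    }
}
```

For opaque red, `PackArgb(255, 255, 0, 0)` yields 0xFFFF0000, and its bytes in memory are 0, 0, 255, 255: blue, green, red, alpha. That byte order is what the format name B8G8R8A8 describes when the input layout is defined further down.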

We are not done yet. The vertex buffer is just a stream of bytes and we need to tell our device how to interpret this data. This is exactly what the InputLayout is made for.

In previous tutorials I used this InputLayout:

var elements = new[] { new InputElement("POSITION", 0, Format.R32G32B32_Float, 0) };
layout = new InputLayout(DeviceManager.Instance.device, inputSignature, elements);

The InputLayout needs an array of InputElements. So far this array consisted of the single element defined above, which describes only the position. We now add a second InputElement for the color:

var elements = new[] {
    new InputElement("POSITION", 0, Format.R32G32B32_Float, 0),
    new InputElement("COLOR", 0, Format.B8G8R8A8_UNorm, 12, 0)
};
layout = new InputLayout(DeviceManager.Instance.device, inputSignature, elements);

The second InputElement, the one for color, also specifies its byte offset from the beginning of the vertex structure. As the position consists of three floats and one float is four bytes in size, the color starts at byte 12.
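The offset does not have to be counted by hand; the runtime can report it. The following is a small sketch under one assumption: `Float3` is a hypothetical stand-in for SlimDX's Vector3 (three sequential 4-byte floats) so that the example compiles without SlimDX, and `SketchVertex` and `VertexLayoutInfo` are likewise illustrative names.

```csharp
using System;
using System.Runtime.InteropServices;

// Stand-in for SlimDX's Vector3 (assumption: three sequential 4-byte floats).
[StructLayout(LayoutKind.Sequential)]
public struct Float3
{
    public float X;
    public float Y;
    public float Z;
}

// Same field order as the Vertex struct in this tutorial.
[StructLayout(LayoutKind.Sequential)]
public struct SketchVertex
{
    public Float3 Position;
    public int Color;
}

public static class VertexLayoutInfo
{
    // Byte offset of the position from the start of the vertex.
    public static int PositionOffset()
    {
        return Marshal.OffsetOf<SketchVertex>("Position").ToInt32();
    }

    // Byte offset of the color from the start of the vertex.
    public static int ColorOffset()
    {
        return Marshal.OffsetOf<SketchVertex>("Color").ToInt32();
    }

    // Size of one vertex in bytes, i.e. the stride of the vertex buffer.
    public static int Stride()
    {
        return Marshal.SizeOf<SketchVertex>();
    }
}
```

With this layout `ColorOffset()` reports 12 and `Stride()` reports 16, matching the offset passed to the color InputElement and the vertex stride used in the full source code.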

Just like before, we need to set the input layout in the device before making the draw call:

DeviceManager.Instance.context.InputAssembler.InputLayout = layout;

We also have to adjust the shader, but I will come to this later. First let us create some geometry to render.

Color Cube

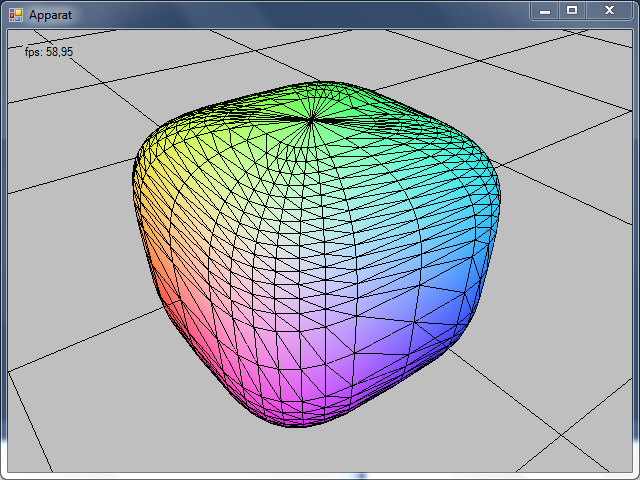

I will use the color cube as an example and this is what we are aiming at:

The cube consists of 8 vertices and each has a different color. The color of a pixel on the surface of the cube is interpolated from the colors of the three vertices of the triangle it lies in, according to the pixel's position within that triangle.
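As a rough illustration of that interpolation, the sketch below blends three vertex colors with barycentric weights. It is a simplification: the hardware does this per pixel and, by default, in a perspective-correct way, while this example shows only the plain linear blend. The class `ColorInterpolation` and its method `Blend` are hypothetical names.

```csharp
using System;

public static class ColorInterpolation
{
    // Blends three RGB colors (components in the range 0..1) using
    // barycentric weights w0, w1, w2, which are expected to sum to 1.
    public static float[] Blend(float[] c0, float[] c1, float[] c2,
                                float w0, float w1, float w2)
    {
        var result = new float[3];
        for (int i = 0; i < 3; i++)
        {
            result[i] = c0[i] * w0 + c1[i] * w1 + c2[i] * w2;
        }
        return result;
    }
}
```

A point exactly at the first vertex has the weights (1, 0, 0) and receives that vertex's color unchanged; a point halfway along the edge between a red and a green vertex has the weights (0.5, 0.5, 0) and receives an even mix of both.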

I define the vertices of the cube so that the center of the cube coincides with the origin of its local coordinate system. In short: the center of the cube is (0,0,0).

The coordinate frame sits in the center of the cube. A widely used color scheme maps the axes to the RGB color model: x-axis: red, y-axis: green, z-axis: blue. So what is up with those pluses and minuses? To keep the graphic clear, I omitted the values of the positions and depicted only the signs of the vector elements. Take a look at the x-axis: every vertex of the cube that lies on the positive side of the x-axis (all vertices on the right) has a plus sign, and every vertex on the negative side (all vertices on the left) has a minus sign.

And what is the purpose of this? If a position element (x, y or z) has a negative sign, I set the corresponding color element (R, G or B) to zero, and if it has a positive sign, I set the color element to 255. This is how I fill the vertex buffer, and I colored the corresponding values green and red to make this pattern more visible:
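The sign rule can also be written down as a tiny helper. This is only a sketch of the rule described above, not code from the tutorial's project; `SignColor`, `Channel` and `FromPosition` are hypothetical names, and the result uses the same 0xAARRGGBB packing as Color.ToArgb() with alpha fixed at 255.

```csharp
using System;

public static class SignColor
{
    // A positive coordinate gives a full channel (255), a negative one gives 0.
    public static int Channel(float coordinate)
    {
        return coordinate > 0f ? 255 : 0;
    }

    // Maps x to red, y to green and z to blue and packs the result
    // as 0xAARRGGBB with an opaque alpha channel.
    public static int FromPosition(float x, float y, float z)
    {
        int r = Channel(x);
        int g = Channel(y);
        int b = Channel(z);
        return unchecked((int)0xFF000000) | (r << 16) | (g << 8) | b;
    }
}
```

For example, the corner (+0.5, +0.5, -0.5) maps to red 255, green 255, blue 0, which is yellow, and the corner (-0.5, -0.5, -0.5) maps to black.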

Now that we have set up the vertex buffer it is time to set up the index buffer. This picture depicts the order in which I have defined the vertices:

The order in which the vertices are defined is completely arbitrary, but once we have defined them we need to stay consistent with this definition to get the triangles rendered correctly. By default, DirectX treats a triangle as front-facing when its vertices are enumerated clockwise. So for each side, as seen when looking straight at it from outside the cube, you have to enumerate the indices in clockwise order:

Take the picture above and consider looking straight at the top of the cube. We have indices 0, 1, 2 and 3. The triangulation I chose is (0,1,2) and (2,3,0). This is also arbitrary, as you could just as well triangulate this side with (3,0,1) and (1,2,3). As long as you enumerate the indices in clockwise order you get a valid triangulation.

I fill the index buffer according to the picture above:

// Cube has 6 sides: top, bottom, left, right, front, back

// top
indices.WriteRange(new short[] { 0, 1, 2 });
indices.WriteRange(new short[] { 2, 3, 0 });

// right
indices.WriteRange(new short[] { 0, 5, 6 });
indices.WriteRange(new short[] { 6, 1, 0 });

// left
indices.WriteRange(new short[] { 2, 7, 4 });
indices.WriteRange(new short[] { 4, 3, 2 });

// front
indices.WriteRange(new short[] { 1, 6, 7 });
indices.WriteRange(new short[] { 7, 2, 1 });

// back
indices.WriteRange(new short[] { 3, 4, 5 });
indices.WriteRange(new short[] { 5, 0, 3 });

// bottom
indices.WriteRange(new short[] { 6, 5, 4 });
indices.WriteRange(new short[] { 4, 7, 6 });
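Since a single swapped index is easy to miss, it can help to check the winding numerically. The sketch below is one possible check, not part of the tutorial's project: it relies on the observation that, with x to the right, y up and z pointing away from the viewer, a triangle (a, b, c) that appears clockwise from outside has a cross product (b - a) x (c - a) that points away from the cube's center. The class `WindingCheck` and its members are hypothetical names; the corner positions and indices are copied from this tutorial.

```csharp
using System;

public static class WindingCheck
{
    // The 8 cube corners in the order used by the vertex buffer.
    static readonly float[][] Corners =
    {
        new[] { +0.5f, +0.5f, +0.5f }, // 0
        new[] { +0.5f, +0.5f, -0.5f }, // 1
        new[] { -0.5f, +0.5f, -0.5f }, // 2
        new[] { -0.5f, +0.5f, +0.5f }, // 3
        new[] { -0.5f, -0.5f, +0.5f }, // 4
        new[] { +0.5f, -0.5f, +0.5f }, // 5
        new[] { +0.5f, -0.5f, -0.5f }, // 6
        new[] { -0.5f, -0.5f, -0.5f }  // 7
    };

    // The 12 triangles: top, right, left, front, back, bottom.
    public static readonly short[] Indices =
    {
        0, 1, 2,  2, 3, 0,
        0, 5, 6,  6, 1, 0,
        2, 7, 4,  4, 3, 2,
        1, 6, 7,  7, 2, 1,
        3, 4, 5,  5, 0, 3,
        6, 5, 4,  4, 7, 6
    };

    // True when (b - a) x (c - a) points away from the origin,
    // which is the center of the cube.
    public static bool FacesOutward(int ia, int ib, int ic)
    {
        float[] a = Corners[ia], b = Corners[ib], c = Corners[ic];
        float ux = b[0] - a[0], uy = b[1] - a[1], uz = b[2] - a[2];
        float vx = c[0] - a[0], vy = c[1] - a[1], vz = c[2] - a[2];

        // Cross product of the two edge vectors.
        float nx = uy * vz - uz * vy;
        float ny = uz * vx - ux * vz;
        float nz = ux * vy - uy * vx;

        // Triangle centroid; it doubles as the direction from the cube
        // center to the triangle because the center is the origin.
        float cx = (a[0] + b[0] + c[0]) / 3f;
        float cy = (a[1] + b[1] + c[1]) / 3f;
        float cz = (a[2] + b[2] + c[2]) / 3f;

        return nx * cx + ny * cy + nz * cz > 0f;
    }

    // Checks every triangle of the index list.
    public static bool AllTrianglesFaceOutward()
    {
        for (int i = 0; i < Indices.Length; i += 3)
        {
            if (!FacesOutward(Indices[i], Indices[i + 1], Indices[i + 2]))
            {
                return false;
            }
        }
        return true;
    }
}
```

For the index list above, `AllTrianglesFaceOutward()` returns true. Reversing any single triangle, for example (0,1,2) to (2,1,0), makes `FacesOutward` return false for it.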

Source Code

Putting everything together, this is the complete source code for the ColorCube Renderable:

using System;
using System.Collections.Generic;
using System.Linq;
using System.Text;
using System.Drawing;
using SlimDX.D3DCompiler;
using SlimDX;
using SlimDX.Direct3D11;
using SlimDX.DXGI;
using System.Runtime.InteropServices;

namespace Apparat.Renderables
{
    public class ColorCube : Renderable
    {
        ShaderSignature inputSignature;
        EffectTechnique technique;
        EffectPass pass;

        Effect effect;

        InputLayout layout;
        SlimDX.Direct3D11.Buffer vertexBuffer;
        SlimDX.Direct3D11.Buffer indexBuffer;
        DataStream vertices;
        DataStream indices;

        int vertexStride = 0;
        int numVertices = 0;
        int indexStride = 0;
        int numIndices = 0;

        int vertexBufferSizeInBytes = 0;
        int indexBufferSizeInBytes = 0;

        EffectMatrixVariable tmat;

        [StructLayout(LayoutKind.Sequential)]
        public struct Vertex
        {
            public Vector3 Position;
            public int Color;

            public Vertex(Vector3 position, int color)
            {
                this.Position = position;
                this.Color = color;
            }
        }

        public ColorCube()
        {
            try
            {
                using (ShaderBytecode effectByteCode = ShaderBytecode.CompileFromFile(
                    "Shaders/colorEffect.fx",
                    "Render",
                    "fx_5_0",
                    ShaderFlags.EnableStrictness,
                    EffectFlags.None))
                {
                    effect = new Effect(DeviceManager.Instance.device, effectByteCode);
                    technique = effect.GetTechniqueByIndex(0);
                    pass = technique.GetPassByIndex(0);
                    inputSignature = pass.Description.Signature;
                }
            }
            catch (Exception ex)
            {
                Console.WriteLine(ex.ToString());
            }

            // Position: three floats at byte 0; color: packed BGRA at byte 12
            var elements = new[] {
                new InputElement("POSITION", 0, Format.R32G32B32_Float, 0),
                new InputElement("COLOR", 0, Format.B8G8R8A8_UNorm, 12, 0)
            };
            layout = new InputLayout(DeviceManager.Instance.device, inputSignature, elements);

            tmat = effect.GetVariableByName("gWVP").AsMatrix();

            // half length of an edge
            float offset = 0.5f;

            vertexStride = Marshal.SizeOf(typeof(Vertex)); // 16 bytes
            numVertices = 8;
            vertexBufferSizeInBytes = vertexStride * numVertices;

            vertices = new DataStream(vertexBufferSizeInBytes, true, true);

            // A positive coordinate sets its color channel to 255, a negative one to 0
            vertices.Write(new Vertex(new Vector3(+offset, +offset, +offset), Color.FromArgb(255, 255, 255).ToArgb())); // 0
            vertices.Write(new Vertex(new Vector3(+offset, +offset, -offset), Color.FromArgb(255, 255, 000).ToArgb())); // 1
            vertices.Write(new Vertex(new Vector3(-offset, +offset, -offset), Color.FromArgb(000, 255, 000).ToArgb())); // 2
            vertices.Write(new Vertex(new Vector3(-offset, +offset, +offset), Color.FromArgb(000, 255, 255).ToArgb())); // 3

            vertices.Write(new Vertex(new Vector3(-offset, -offset, +offset), Color.FromArgb(000, 000, 255).ToArgb())); // 4
            vertices.Write(new Vertex(new Vector3(+offset, -offset, +offset), Color.FromArgb(255, 000, 255).ToArgb())); // 5
            vertices.Write(new Vertex(new Vector3(+offset, -offset, -offset), Color.FromArgb(255, 000, 000).ToArgb())); // 6
            vertices.Write(new Vertex(new Vector3(-offset, -offset, -offset), Color.FromArgb(000, 000, 000).ToArgb())); // 7

            vertices.Position = 0;

            vertexBuffer = new SlimDX.Direct3D11.Buffer(
                DeviceManager.Instance.device,
                vertices,
                vertexBufferSizeInBytes,
                ResourceUsage.Default,
                BindFlags.VertexBuffer,
                CpuAccessFlags.None,
                ResourceOptionFlags.None,
                0);

            numIndices = 36;
            indexStride = Marshal.SizeOf(typeof(short)); // 2 bytes
            indexBufferSizeInBytes = numIndices * indexStride;

            indices = new DataStream(indexBufferSizeInBytes, true, true);

            // Cube has 6 sides: top, bottom, left, right, front, back

            // top
            indices.WriteRange(new short[] { 0, 1, 2 });
            indices.WriteRange(new short[] { 2, 3, 0 });

            // right
            indices.WriteRange(new short[] { 0, 5, 6 });
            indices.WriteRange(new short[] { 6, 1, 0 });

            // left
            indices.WriteRange(new short[] { 2, 7, 4 });
            indices.WriteRange(new short[] { 4, 3, 2 });

            // front
            indices.WriteRange(new short[] { 1, 6, 7 });
            indices.WriteRange(new short[] { 7, 2, 1 });

            // back
            indices.WriteRange(new short[] { 3, 4, 5 });
            indices.WriteRange(new short[] { 5, 0, 3 });

            // bottom
            indices.WriteRange(new short[] { 6, 5, 4 });
            indices.WriteRange(new short[] { 4, 7, 6 });

            indices.Position = 0;

            indexBuffer = new SlimDX.Direct3D11.Buffer(
                DeviceManager.Instance.device,
                indices,
                indexBufferSizeInBytes,
                ResourceUsage.Default,
                BindFlags.IndexBuffer,
                CpuAccessFlags.None,
                ResourceOptionFlags.None,
                0);
        }

        public override void render()
        {
            Matrix ViewPerspective = CameraManager.Instance.ViewPerspective;
            tmat.SetMatrix(ViewPerspective);

            DeviceManager.Instance.context.InputAssembler.InputLayout = layout;
            DeviceManager.Instance.context.InputAssembler.PrimitiveTopology = PrimitiveTopology.TriangleList;
            DeviceManager.Instance.context.InputAssembler.SetVertexBuffers(0, new VertexBufferBinding(vertexBuffer, vertexStride, 0));
            DeviceManager.Instance.context.InputAssembler.SetIndexBuffer(indexBuffer, Format.R16_UInt, 0);

            technique = effect.GetTechniqueByName("Render");

            EffectTechniqueDescription techDesc;
            techDesc = technique.Description;

            for (int p = 0; p < techDesc.PassCount; ++p)
            {
                technique.GetPassByIndex(p).Apply(DeviceManager.Instance.context);
                DeviceManager.Instance.context.DrawIndexed(numIndices, 0, 0);
            }
        }

        public override void dispose()
        {
            effect.Dispose();
            inputSignature.Dispose();
            vertexBuffer.Dispose();
            indexBuffer.Dispose(); // the index buffer was not released before
            vertices.Dispose();    // the data streams hold memory of their own
            indices.Dispose();
            layout.Dispose();
        }
    }
}

Shader

As mentioned above, we also have to modify our shader in order to render the color of a vertex:

matrix gWVP;

struct VOut
{
    float4 position : SV_POSITION;
    float4 color : COLOR;
};

VOut VShader(float4 position : POSITION, float4 color : COLOR)
{
    VOut output;

    output.position = mul( position, gWVP);
    output.color = color;

    return output;
}

float4 PShader(float4 position : SV_POSITION, float4 color : COLOR) : SV_TARGET
{
    return color;
}

RasterizerState WireframeState
{
    FillMode = Wireframe;
    CullMode = None;
    FrontCounterClockwise = false;
};

technique10 Render
{
    pass P0
    {
        SetVertexShader( CompileShader( vs_4_0, VShader() ));
        SetGeometryShader( NULL );
        SetPixelShader( CompileShader( ps_4_0, PShader() ));
        //SetRasterizerState(WireframeState);
    }
}

Not much is going on in the vertex shader VShader. The position of the vertex is multiplied by the WorldViewPerspective matrix from our camera to transform it to the right screen position, and the color of the vertex is simply passed through to the output of the shader.

Well, something is new. Take a look at the vertex shaders used in previous tutorials:

float4 VShader(float4 position : POSITION) : SV_POSITION
{
    return mul( position, gWVP);
}

This shader performed the above-mentioned transformation from the local coordinate system of the model to screen space and returned a float4 structure.

Compare this to the new vertex shader. Its output is a newly defined struct called VOut. To be able to hand the color information of the vertex down to the pixel shader, we need a structure that holds the color in addition to the position.

Result

Now we can render vertices with color and get a nice color cube:

In the next tutorial I will show how to render a wireframe over this colored cube. If you download the code and play around with this example, you will notice that the grid is not rendered over the cube even when it lies between the camera and the cube. This is because we have not set up a depth buffer yet; this will be addressed in a later tutorial.

You can download the source code to this tutorial here.